Closed Loops

Something changed recently in how I think about programming with AI, and I've been trying to figure out how to explain it.

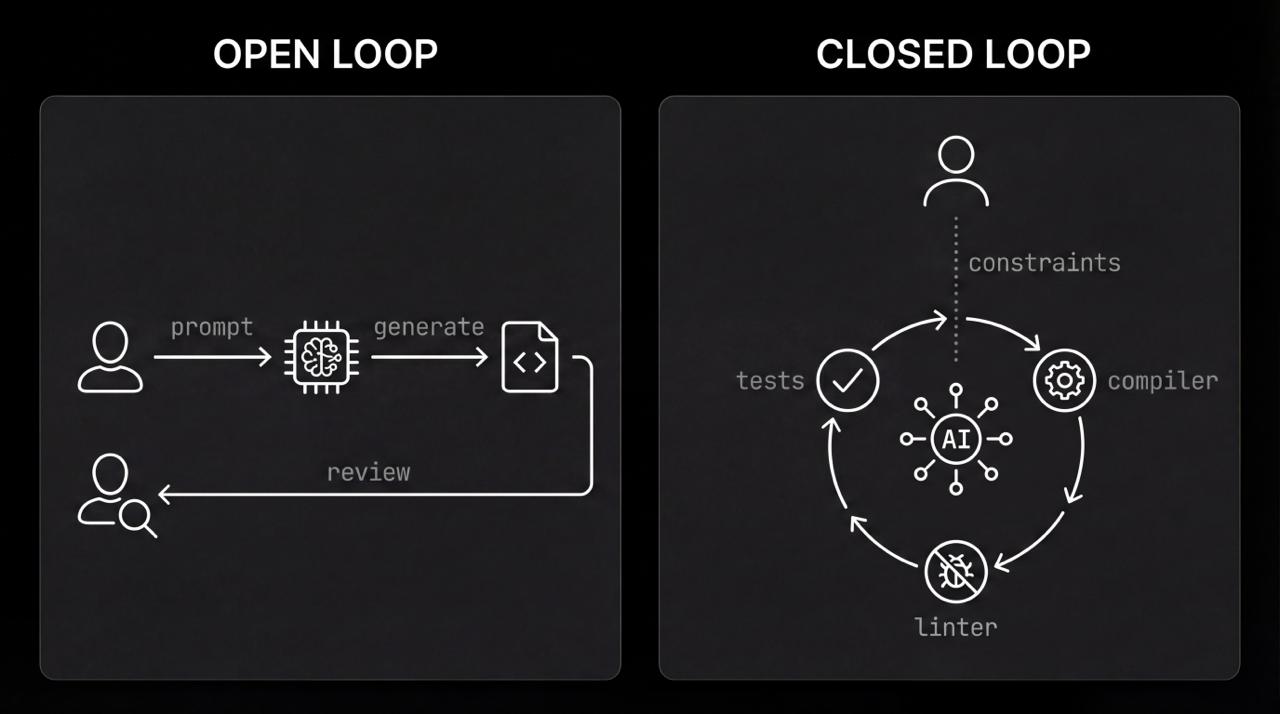

Most people still think of AI coding tools as fancy autocomplete. You type, it suggests, you accept or reject. This is what I'd call open-loop programming. The human reads everything, evaluates everything, decides everything. The AI is just a faster typist.

This scales terribly. If you have to read every line to make sure it doesn't break the build, you're still bottlenecked on human reading speed. You've made the typing faster but kept all the slow parts.

The interesting thing happens when you close the loop.

In a closed-loop system, you don't give the agent code. You give it constraints. You give it access to the compiler, the linter, the tests. The agent writes something, runs it, sees the error, and tries again. It keeps going until the tests pass.
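To make the loop concrete, here is a minimal sketch. Every name in it (`Check`, `Proposer`, `closeLoop`, `toyAgent`) is hypothetical and chosen for illustration; it is not the API of any real agent framework. The "agent" is a stand-in that works through canned drafts, and the "tests" are a single check function. A real setup would call a model and feed the errors back to it.

```typescript
// All names here are illustrative assumptions, not a real agent API.

/** Stands in for a compiler, linter, or test run: returns error messages, empty when satisfied. */
type Check<T> = (candidate: T) => string[];

/** Stands in for the agent: given the errors from the last attempt, produce a new candidate. */
type Proposer<T> = (feedback: string[]) => T;

interface LoopResult<T> {
  ok: boolean;
  attempts: number;
  candidate: T | undefined;
  errors: string[];
}

function closeLoop<T>(
  propose: Proposer<T>,
  checks: Check<T>[],
  maxAttempts: number,
): LoopResult<T> {
  let feedback: string[] = [];
  let candidate: T | undefined = undefined;

  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const current = propose(feedback);
    candidate = current;

    // Run every check and collect whatever it complains about.
    feedback = [];
    for (const check of checks) {
      feedback.push(...check(current));
    }

    // No complaints means the loop has closed.
    if (feedback.length === 0) {
      return { ok: true, attempts: attempt, candidate, errors: [] };
    }
  }

  // Budget exhausted: hand the last errors back to the human.
  return { ok: false, attempts: maxAttempts, candidate, errors: feedback };
}

type BinaryOp = (a: number, b: number) => number;

// Toy agent: ignores the feedback and simply tries its next canned draft.
// A real agent would read the error messages before proposing again.
const drafts: BinaryOp[] = [
  (a, b) => a - b, // wrong
  (a, b) => a * b, // wrong
  (a, b) => a + b, // right
];
let next = 0;
const toyAgent: Proposer<BinaryOp> = () =>
  drafts[Math.min(next++, drafts.length - 1)];

// The constraint the human supplies instead of code.
const addsCorrectly: Check<BinaryOp> = (add) =>
  add(2, 3) === 5 ? [] : [`add(2, 3) returned ${add(2, 3)}, expected 5`];

const result = closeLoop(toyAgent, [addsCorrectly], 5);
console.log(result.ok, result.attempts); // true 3
```

The attempt budget matters as much as the checks: without `maxAttempts`, a loop that cannot close would simply run forever.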

When I first saw this working well, it felt like a different activity entirely. The engineer's job shifted from writing code to designing the environment where code could safely write itself.

I want to be careful here, because I think this idea gets discussed in ways that are either too dismissive or too breathless. On one hand, some engineers wave it away as hype, insisting that "you still need to understand the code." On the other, some treat it as a silver bullet that will make programming effortless.

Neither is quite right, and neither framing is useful for thinking about what's actually happening.

The reality is more interesting and more strange. We are in a transitional period where the fundamental unit of programming work is shifting. Not disappearing. Shifting.

This is why I've become a TypeScript evangelist, which surprises me as much as anyone.

Types are usually sold as bug prevention. That's true but boring. The interesting thing about types in an agentic world is that they're the most efficient way for a human architect to communicate with a machine builder.

Think about it. In a dynamic language, a variable could be anything. The agent has to guess. But when you define types, you're giving the agent a machine-readable specification of what the world looks like. You're drawing a map before it starts exploring.
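A small sketch of what that map can look like. The domain (`BillingEvent`, `netCents`) is made up for illustration. The point is that the union type lists every shape an event can take, and the `never` assignment in the `default` branch turns "you forgot a case" into a compile error rather than a guess.

```typescript
// Hypothetical domain, invented for this example.
type BillingEvent =
  | { kind: "charged"; amountCents: number }
  | { kind: "refunded"; amountCents: number; reason: string }
  | { kind: "failed"; errorCode: string };

function netCents(events: BillingEvent[]): number {
  let total = 0;
  for (const event of events) {
    switch (event.kind) {
      case "charged":
        total += event.amountCents;
        break;
      case "refunded":
        total -= event.amountCents;
        break;
      case "failed":
        // Nothing moved, so nothing to add.
        break;
      default: {
        // If a new variant is added to BillingEvent and not handled above,
        // this assignment stops type-checking.
        const unreachable: never = event;
        throw new Error(`unhandled event: ${JSON.stringify(unreachable)}`);
      }
    }
  }
  return total;
}

const ledger: BillingEvent[] = [
  { kind: "charged", amountCents: 2500 },
  { kind: "refunded", amountCents: 500, reason: "damaged item" },
  { kind: "failed", errorCode: "card_declined" },
];
console.log(netCents(ledger)); // 2000
```

An agent asked to add a fourth event kind does not need to search the codebase for every place that handles events. The type checker lists them.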

When the agent makes a mistake in TypeScript, the feedback is immediate. It doesn't wait for a runtime crash three function calls deep. The type checker reports the error, with a file and a line number, as soon as the code is checked; it's the same diagnostic a human sees as a red squiggle in the editor. That tight feedback loop is the difference between an agent that wanders around lost and one that converges on a solution in seconds.
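Here is a small illustration of the difference, assuming `strict` (or at least `strictNullChecks`) is enabled in the project's compiler options. `UserRecord`, `domainOfUntyped`, and `domainOf` are hypothetical names. The first function opts out of type checking with `any`, so its bug only shows up when it runs. The second cannot be written the same way: the type checker flags `user.email` as possibly null until the null case is handled.

```typescript
// Hypothetical types and functions, for illustration only.
interface UserRecord {
  id: string;
  email: string | null;
}

// `any` switches the type checker off, so this compiles and fails later, at runtime.
function domainOfUntyped(user: any): string {
  return user.email.split("@")[1];
}

// With the real type, calling a string method on `user.email` before the null
// check is a compile-time error, so the mistake never reaches a running program.
function domainOf(user: UserRecord): string | null {
  if (user.email === null) {
    return null;
  }
  const at = user.email.indexOf("@");
  return at === -1 ? null : user.email.slice(at + 1);
}

const anonymous: UserRecord = { id: "u1", email: null };

let runtimeError = "";
try {
  domainOfUntyped(anonymous);
} catch (e) {
  runtimeError = e instanceof Error ? e.name : String(e);
}
console.log(runtimeError); // TypeError
console.log(domainOf(anonymous)); // null
console.log(domainOf({ id: "u2", email: "ada@example.com" })); // example.com
```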

To be clear, I'm not claiming that TypeScript is the only language that works for agentic coding, or that dynamic languages are useless. What I am saying is that the properties that make a language good for human programmers are not identical to the properties that make it good for human-AI collaboration. Static typing happens to be one property that matters more in the latter case than we previously appreciated.

There may be others we haven't discovered yet.

Here's the part I didn't expect: this actually forces you to write better architecture.

To close the loop, your code has to be testable. If your architecture is a mess of hidden dependencies and global state, the agent gets lost and the loop never closes. You can't fake it.
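A minimal sketch of the difference, using a clock as the hidden dependency. The names (`Clock`, `isExpired`, `fixedClock`) are assumptions made for this example. The first version reads the wall clock directly, so a test cannot pin down its answer. The second takes the clock as an argument, so a test (or an agent running one) gets the same result every time.

```typescript
// Hypothetical names, for illustration only.

// Hidden dependency: the answer depends on when the function happens to run.
function isExpiredHidden(expiresAtMs: number): boolean {
  return Date.now() > expiresAtMs;
}

// Explicit dependency: the caller decides what "now" means.
interface Clock {
  nowMs(): number;
}

function isExpired(expiresAtMs: number, clock: Clock): boolean {
  return clock.nowMs() > expiresAtMs;
}

// Production code passes the real clock.
const systemClock: Clock = { nowMs: () => Date.now() };

// Tests pass a frozen one, which makes the check deterministic.
const fixedClock = (ms: number): Clock => ({ nowMs: () => ms });

console.log(isExpired(1_000, fixedClock(1_001))); // true
console.log(isExpired(1_000, fixedClock(1_000))); // false
console.log(isExpired(Number.MAX_SAFE_INTEGER, systemClock)); // false
```

The same move applies to databases, network calls, and configuration: anything the function reaches for on its own is something the loop cannot control.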

The AI becomes a kind of brutally honest mirror, reflecting back the quality of your system design.

Some people find this frustrating. They want the AI to work with whatever code they have. But I think this frustration, while understandable, misses something important. The discipline required to work effectively with AI agents turns out to be the same discipline that produces good software in general.

The AI isn't imposing arbitrary constraints. It's exposing constraints that were always there but that we could previously work around through heroic human effort.

So the weird irony is that to use AI effectively, you have to be a better architect than you were when you were just a coder. You have to build what I think of as "testable playgrounds" where agents can operate without supervision.

I should acknowledge uncertainty here. It's possible that future AI systems will become good enough to work with poorly structured code, in the same way that humans learn to navigate legacy systems. But I doubt that will change the picture, for two reasons.

First, the feedback problem is fundamental: an agent needs signals to know whether it's succeeding, and poorly structured code provides weak ones. Second, even if AI could handle messy code, economic incentives will push toward clean code anyway, because clean code lets you use cheaper, faster models.

We're moving from writing software to orchestrating it. The best engineers of the next decade won't be the fastest typists. They'll be the ones who can most clearly define the boundaries of a problem and build the loops that solve it.

That's a very different skill. I'm not sure we even know how to teach it yet. But I suspect it has more in common with systems thinking and API design than with the line-by-line coding that currently dominates computer science education.

If this is right, and I think there's a decent chance it is, then the implications extend beyond individual productivity. The entire structure of software teams may need to change. The ratio of architects to implementers. The skills we hire for. The way we evaluate code quality. All of it.

I find this both exciting and somewhat disquieting. Exciting because better tools should let us build better things. Disquieting because transitions like this are never smooth, and many people will find that skills they spent years developing are suddenly less valuable than they expected.

I don't have a clean solution to that problem. But I do think the first step is being honest about what's happening, rather than pretending either that nothing is changing or that the changes will be painless.